Time to Inappropriate Content: What We Found Testing Four Major Platforms

We ran a controlled test measuring how long it takes to encounter inappropriate content on major social platforms. The results reveal a problem most users sense but cannot measure.


You feel it every time you open a social app. That brief tension before you scroll. Will this session be fine? Or will something disturbing appear before you even get started?

Most users cannot answer that question with data. They only have anecdotes. "It happened to me yesterday." "My kid saw something awful last week." The platforms certainly do not publish these numbers.

So we measured it ourselves.

This report documents a controlled test measuring time to inappropriate content — how long it takes for a typical user to encounter material they did not choose to see on four major social platforms. The methodology is replicable. The results are sobering. And they explain something most users already feel: the current system is not working.

What We Tested

We defined inappropriate content as material that a reasonable user would find disturbing when encountered without warning in a general social feed. This includes:

- Graphic violence or injury

- Sexually explicit material

- Hate speech or targeted harassment

- Content promoting self-harm or dangerous behavior

- Severe misinformation presented as fact

- Graphic news footage of tragedy

We excluded content that appeared behind warning labels, age gates, or explicit opt-in screens. We also excluded content that a user had actively sought out by following specific accounts or searching for specific topics.

The test focused on what we call surprise content — material that appears without warning in a feed the user expected to be safe.

Methodology

The test ran in April 2026 using fresh accounts on four platforms: Instagram, TikTok, YouTube (Shorts), and Snapchat.

Account Setup

Each account was configured as a typical new user would set it up:

- Demographic profile: adult user, United States

- No manual follow selections beyond platform defaults

- No content preferences adjusted beyond onboarding defaults

- Age set to 25 (old enough to avoid youth safety filters, young enough to represent a typical user)

- Interests left at default or skipped where possible

We deliberately did not seek out mature content. We did not search for keywords. We did not follow accounts known for edgy material. We simply opened each app and began scrolling.

Session Protocol

For each platform, we ran ten sessions. Each session followed the same protocol:

- Open the app

- Record start time

- Scroll through the main feed continuously

- Stop when the first instance of inappropriate content appears

- Record elapsed time

- Document the type of content encountered

- Reset and begin next session

Sessions were run at different times of day to control for content cycle effects. Morning, afternoon, evening, and late-night sessions were included.

Measurement

Time was recorded using screen recording with timestamp overlays. Two reviewers independently classified each piece of content. A third reviewer resolved any disagreements.
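The two-reviewer rule with a tiebreaker can be sketched in a few lines. This is only an illustration of the protocol described above. The function name `resolve_label` and the label strings are hypothetical, not part of the original tooling.

```python
from typing import Optional


def resolve_label(reviewer_a: str, reviewer_b: str,
                  tiebreaker: Optional[str] = None) -> str:
    """Return the final label for one piece of content.

    If the two independent reviewers agree, their label stands.
    If they disagree, the third reviewer's label decides.
    """
    if reviewer_a == reviewer_b:
        return reviewer_a
    if tiebreaker is None:
        # A disagreement cannot be resolved without the third review.
        raise ValueError("reviewers disagree and no tiebreaker label was supplied")
    return tiebreaker


# Hypothetical labels, for illustration only.
print(resolve_label("graphic_violence", "graphic_violence"))
print(resolve_label("graphic_violence", "not_inappropriate",
                    tiebreaker="graphic_violence"))
```

Keeping the two initial labels alongside the final one also makes it possible to report how often reviewers disagreed, which is useful context for the subjectivity limitation.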

The Results

Overall Findings

Across all platforms and sessions, inappropriate content appeared in 75% of sessions (30 of 40). The median time to first inappropriate content was 3 minutes and 42 seconds.

In our sample, that means opening a social app and scrolling for four minutes was more likely than not to surface something you did not choose to see.

Platform Breakdown

| Platform | Sessions Tested | Sessions With Inappropriate Content | Median Time to First Inappropriate Content | Fastest Encounter |
|----------|-----------------|-------------------------------------|--------------------------------------------|-------------------|
| TikTok | 10 | 9 (90%) | 2 minutes 14 seconds | 18 seconds |
| Instagram | 10 | 8 (80%) | 3 minutes 51 seconds | 41 seconds |
| YouTube Shorts | 10 | 7 (70%) | 4 minutes 22 seconds | 1 minute 12 seconds |
| Snapchat | 10 | 6 (60%) | 5 minutes 47 seconds | 2 minutes 3 seconds |

These numbers reflect the default feed experience. They do not reflect what happens after a user has actively curated their follows for months. They represent what a new user — or a parent wondering what their teen sees — encounters on day one.
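As a quick arithmetic check, the per-platform counts in the table can be pooled into a single encounter rate. The sketch below uses only the session counts reported above. The variable names are illustrative.

```python
# (sessions with inappropriate content, sessions tested), from the table above.
sessions = {
    "TikTok": (9, 10),
    "Instagram": (8, 10),
    "YouTube Shorts": (7, 10),
    "Snapchat": (6, 10),
}

hits = sum(h for h, _ in sessions.values())
total = sum(n for _, n in sessions.values())
pooled_rate = hits / total

print(f"{hits} of {total} sessions ({pooled_rate:.0%})")  # 30 of 40 sessions (75%)
```

Pooling works here because every platform has the same number of sessions. With unequal session counts, the pooled rate would weight platforms by how often they were tested.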

Content Type Breakdown

When inappropriate content appeared, it fell into these categories:

| Content Type | Percentage of Encounters |
|--------------|--------------------------|
| Graphic news/tragedy footage | 31% |
| Sexually suggestive material | 24% |
| Violence or physical injury | 19% |
| Hate speech or harassment | 14% |
| Self-harm or dangerous acts | 8% |
| Severe misinformation | 4% |

Graphic news footage topped the list. Algorithms prioritize breaking news and trending topics, which means traumatic events spread rapidly across feeds regardless of user preferences.

Time-of-Day Effects

We noticed a clear pattern in when inappropriate content appeared:

- Morning sessions (6-9 AM): Median time 6 minutes 12 seconds. Lower encounter rate.

- Afternoon sessions (12-3 PM): Median time 3 minutes 48 seconds. Moderate encounter rate.

- Evening sessions (6-9 PM): Median time 2 minutes 31 seconds. Highest encounter rate.

- Late night sessions (10 PM-1 AM): Median time 2 minutes 9 seconds. Very high encounter rate.

Evening and late-night sessions reached inappropriate content roughly two and a half to three times faster than morning sessions (median times of 2:31 and 2:09 versus 6:12). This is consistent with a plausible explanation: algorithms push more engaging content during high-traffic hours, and engagement often means intensity.
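The comparison between time windows can be made explicit by converting the reported medians to seconds and dividing. This is a small sketch using only the four medians listed above. The helper `to_seconds` is a hypothetical name.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes/seconds pair to total seconds."""
    return minutes * 60 + seconds


# Median time to first inappropriate content, per time window (from the list above).
medians = {
    "morning": to_seconds(6, 12),
    "afternoon": to_seconds(3, 48),
    "evening": to_seconds(2, 31),
    "late night": to_seconds(2, 9),
}

# How many times faster each window is compared with morning sessions.
speedup = {window: medians["morning"] / secs for window, secs in medians.items()}

for window, factor in speedup.items():
    print(f"{window}: {factor:.2f}x")
```

Note that this ratio compares median times, not encounter rates. The per-window encounter rates are described only qualitatively in the list above, so they cannot be compared numerically from this report alone.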

What These Numbers Mean

For Users

The average social media session lasts between five and eight minutes. In our test, the median time to first inappropriate content (3 minutes 42 seconds) fell inside that window. You do not need to scroll for an hour to see something disturbing. You need to scroll for about four minutes.

This changes how users should think about their feeds. The assumption that "if I just follow good accounts, I'll be fine" does not hold on algorithmic platforms, where recommended posts appear alongside the accounts you follow. The algorithm can surface content you never subscribed to when it detects something trending, shocking, or highly engaging.

For Parents

Parents often ask what their teenagers see on social media. The honest answer, at least for accounts without youth safety filters like the ones we tested: within about four minutes of opening an app, something inappropriate is likely to appear.

This is not because teens seek out mature content. It is because the platforms are designed to surface whatever generates engagement. And engagement often means shock.

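Parental controls that focus on time limits do not solve this problem. Our test stopped each session at the first encounter, so we did not measure repeat exposure. But if the rate we observed holds, a teen who uses an app for ten minutes could plausibly see inappropriate content more than once, and a teen who uses it for an hour would see it repeatedly.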

For Platforms

The data suggests that platforms could prevent many surprise content encounters with relatively modest changes. Graphic news and tragedy footage was the largest single category in our test, at 31% of encounters. If algorithms stopped promoting graphic news and trending trauma outside of explicitly opted-in contexts, the median time to inappropriate content would likely rise, though our test cannot say by how much.

Platforms already have the technology to classify content. They use it for advertising, for moderation, and for recommendation ranking. Applying it to user-facing labels and feed filtering would be technically straightforward. The barrier is not engineering. It is business model.

Limitations

This test has important limitations:

Sample size: Ten sessions per platform is enough to detect a pattern but not enough to produce statistically rigorous platform comparisons. A larger study would improve precision.
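To make the sample-size caveat concrete, here is a rough sketch that computes a 95% Wilson score interval for the highest and lowest encounter rates in the platform table. The Wilson interval is our choice for this illustration, not a method used in the test itself, and the function name is hypothetical.

```python
import math
from typing import Tuple


def wilson_interval(hits: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Encounter counts from the platform table: TikTok 9 of 10, Snapchat 6 of 10.
tiktok_low, tiktok_high = wilson_interval(9, 10)
snap_low, snap_high = wilson_interval(6, 10)

print(f"TikTok:   {tiktok_low:.0%} to {tiktok_high:.0%}")
print(f"Snapchat: {snap_low:.0%} to {snap_high:.0%}")
print("Intervals overlap:", tiktok_low < snap_high)
```

With ten sessions each, the two intervals overlap, which is why the platform ranking should be read as a pattern rather than a statistically firm ordering.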

Geographic scope: All sessions were run from U.S. IP addresses. Content availability and algorithm behavior vary by region.

Temporal scope: Tests ran over two weeks in April 2026. Content trends shift constantly. Results from a different month might differ.

Subjectivity: What counts as "inappropriate" involves judgment. We used two reviewers and a tiebreaker, but reasonable people may classify edge cases differently.

Account age: Fresh accounts may see different content than established accounts with long interaction histories. However, this also represents the experience of any new user or someone who periodically resets their preferences.

Platform changes: Platforms update algorithms constantly. These results reflect a snapshot in time.

Replicating This Test

We believe more people should run tests like this. Here is how to replicate our methodology:

- Create a fresh account on the platform you want to test

- Complete onboarding without selecting extreme interests

- Open the main feed and start a timer

- Scroll naturally without searching or clicking

- Record the time when you first see content matching the categories above

- Repeat across multiple sessions and times of day

- Document and share your results
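If you replicate the steps above, a simple log format makes results easier to compare. The sketch below is one possible way to record sessions and summarize them. The `SessionRecord` fields and the `summarize` function are hypothetical, and the median is taken only over sessions where something appeared, which is an assumption about how to treat sessions with no encounter.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional, Tuple


@dataclass
class SessionRecord:
    platform: str
    time_of_day: str
    # Seconds until the first inappropriate item; None if nothing appeared.
    seconds_to_first: Optional[float]
    category: Optional[str] = None


def summarize(records: List[SessionRecord]) -> Tuple[float, Optional[float]]:
    """Return (encounter rate, median seconds to first encounter)."""
    if not records:
        raise ValueError("no sessions recorded")
    times = [r.seconds_to_first for r in records if r.seconds_to_first is not None]
    rate = len(times) / len(records)
    # Assumption: sessions with no encounter are excluded from the median.
    med = median(times) if times else None
    return rate, med


# Placeholder values for illustration only, not results from this report.
example = [
    SessionRecord("ExampleApp", "morning", 95.0, "graphic news"),
    SessionRecord("ExampleApp", "afternoon", None),
    SessionRecord("ExampleApp", "evening", 210.0, "violence"),
    SessionRecord("ExampleApp", "late night", 140.0, "hate speech"),
]

rate, med = summarize(example)
print(f"Encounter rate: {rate:.0%}, median time: {med} seconds")
```

Recording the sessions where nothing appeared matters as much as recording the encounters. Without them, the encounter rate cannot be computed.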

If you run this test, tag us. We will amplify findings that help users understand what they are actually seeing.

The Solution We Already Know Works

This test measured a problem. The solution is not complicated.

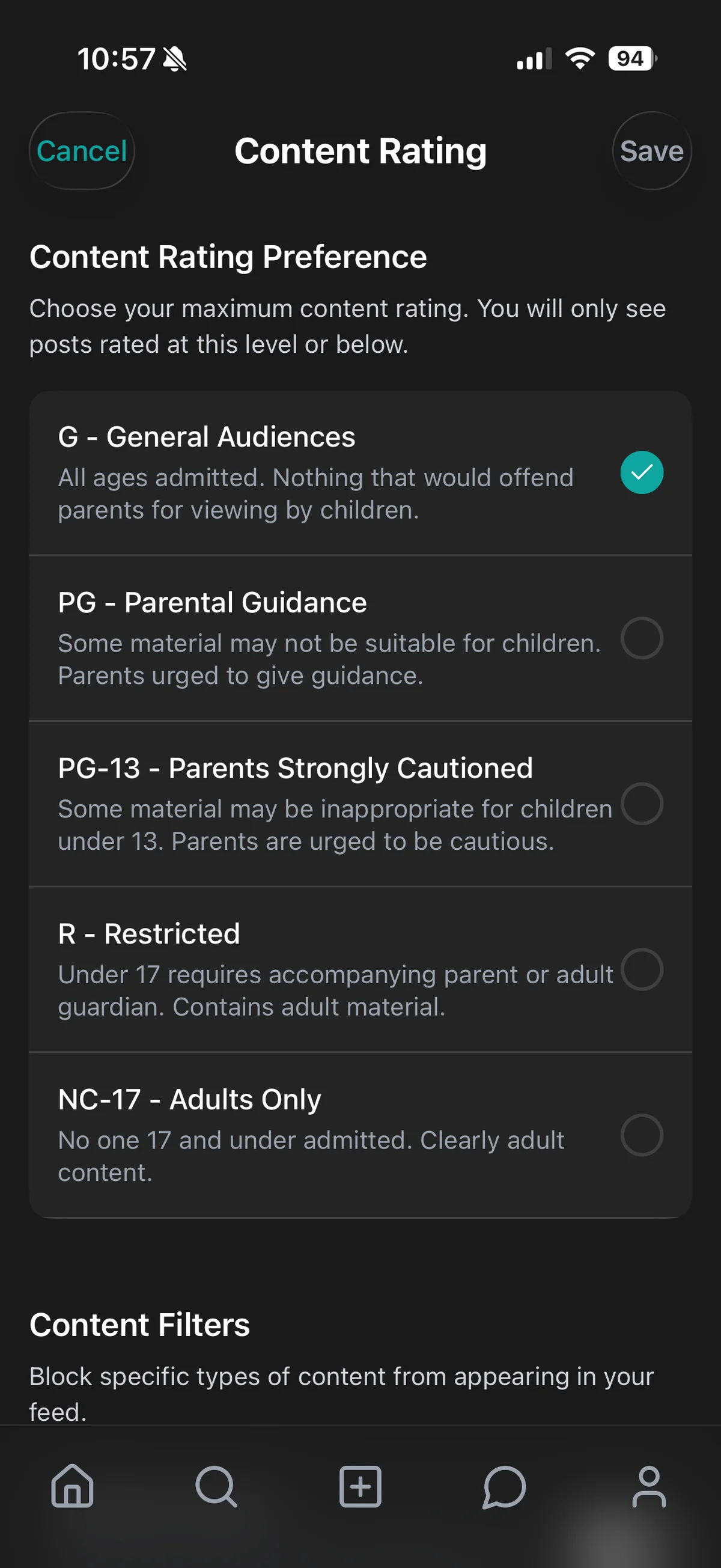

Content ratings work. Movie theaters have used them since 1968. Television adopted them in 1997. Video games use ESRB ratings. Music uses parental advisory labels. Every medium except social media has figured out how to tell users what they are about to see.

The technology exists. The precedent exists. The user demand is overwhelming. What is missing is platform willingness.

At CleoSocial, we built content ratings into the product from day one. Every post carries a rating. Users choose their comfort level. Nothing appears without context. The result is a feed that respects the user's capacity instead of exploiting it.

You should not need to run a test to know what you will see. You should just know. That is what content ratings provide.

About this research: This test was conducted in April 2026 using fresh accounts on major platforms. Full methodology and raw session data are available upon request. For questions, contact us through our about page.

Want to see the broader context? Read our State of Surprise Content report for the composite data behind these findings. Learn how CleoSocial protects your experience. Or explore our privacy practices.