A Proposal — Content Ratings for Social Media

We have ratings for movies, television, music, and video games. These systems exist because we recognize that different people have different limits. Yet social media has remained a one-size-fits-all experience for over a decade. This is not because the problem is unsolvable. It is because the platforms that profit from engagement have no incentive to solve it.

I believe that is about to change. And I believe the solution is simpler than most people think.

What We Already Accept

When you walk into a theater, you know what you are getting. A G-rated film will be family friendly. An R-rated movie will have mature themes. The rating does not ban the content. It simply gives you information. You decide whether to watch.

This is not controversial. The MPAA (now MPA) film rating system has existed since 1968. Video games got the ESRB in 1994. Television followed with the TV Parental Guidelines in 1997. Even music albums carry parental advisory labels. These systems are so normal that we barely notice them.

Social media is the only major medium without ratings. And it is the medium we spend the most time consuming. The average American now spends over seven hours per day looking at screens, with a significant portion of that on social platforms. We have created a world where the most consumed content is also the least labeled.

The Cost of Surprise Content

The absence of ratings has real consequences. Research from Pew and other organizations consistently finds that large majorities of users want more control over the content they see online. Yet most platforms offer little transparency about what appears in feeds.

The problem is not just that people see things they dislike. The problem is that they see things they are not prepared for. A teenager scrolling through fashion content encounters graphic self-harm imagery. A parent checking messages sees a traumatic news video autoplay in their feed. Someone recovering from anxiety is blindsided by rage-bait designed to trigger a reaction.

Research from organizations like the Center for Countering Digital Hate has found that new teen accounts on platforms like TikTok can be recommended self-harm and eating disorder content within minutes of signing up. Not because the teen searched for it. Because the algorithm decided it would keep them scrolling.

This is not a content moderation problem in the traditional sense. The content may not violate any platform rules. It is a discovery problem. Users have no way to know what is coming until it is already in front of them.

How a Rating System Would Work

A content rating system for social media does not need to be complex. It needs to be consistent, transparent, and user-controlled.

Here is a simple framework:

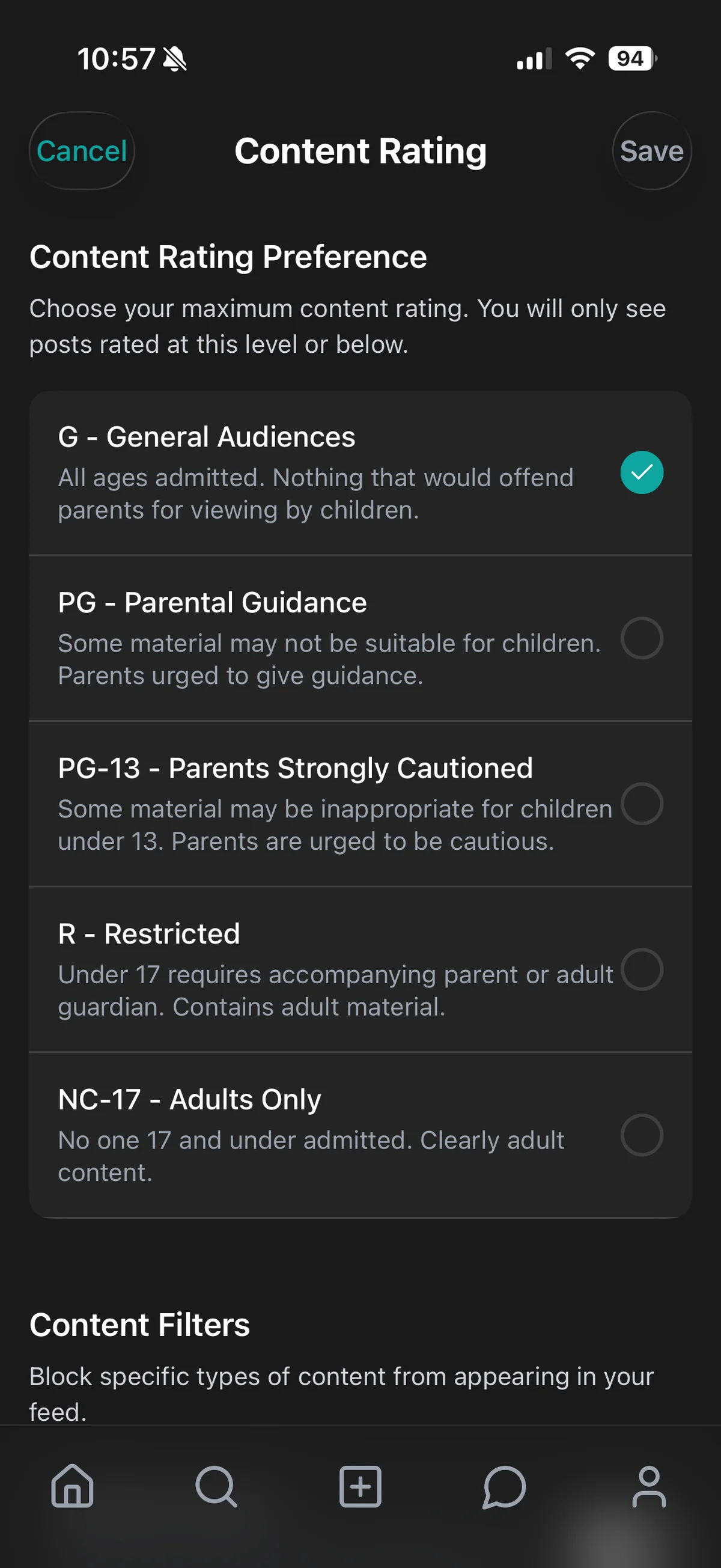

G — General Audiences. Safe for all ages. Positive, educational, or neutral content. No concerning language, no personal information shared publicly, no distressing themes.

PG — Parental Guidance Suggested. Mildly suggestive language or themes. A song recommendation with intense lyrics. A news story about a local controversy. Content that is not harmful but may require context for younger viewers.

PG-13 — Parents Strongly Cautioned. References to substance use, moderate violence, or mature themes. A post about a party where drinking is mentioned. A discussion of mental health struggles that does not include self-harm. Content that is appropriate for most teens but may concern younger children.

R — Restricted. Graphic violence, explicit content, or intense emotional distress. Real-world trauma footage. Self-harm indicators. Content that should only be viewed by adults who have chosen to see it.

These labels would appear on every post before a user engages. Not after. Not hidden in a settings menu. Visible at the point of decision.
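One way to make the framework concrete is to treat the four labels as an ordered scale, so that "more intense than" becomes a simple comparison. The sketch below is an illustration only, not any platform's implementation; the `Rating` class and the `badge` helper are hypothetical names introduced here.

```python
from enum import IntEnum


class Rating(IntEnum):
    """The four labels from the framework above, ordered by intensity."""
    G = 0
    PG = 1
    PG_13 = 2
    R = 3

    @property
    def label(self) -> str:
        # Display form used in the article, e.g. "PG-13".
        return self.name.replace("_", "-")


def badge(post_text: str, rating: Rating) -> str:
    """Put the label ahead of the post body so it is read first."""
    return f"[{rating.label}] {post_text}"


print(badge("Photos from the school science fair", Rating.G))
# prints: [G] Photos from the school science fair
```

Because the scale is ordered, a check such as `Rating.PG < Rating.R` is all a client needs to decide whether a post sits above or below a given level.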

This Is Not Censorship

The most common objection to content ratings is that they lead to censorship. This misunderstands the distinction between labeling and removing.

Censorship means content disappears. No one can see it. Ratings mean content stays visible but carries information about its nature. Users decide whether to engage.

Think of movie ratings again. An R-rated film is not banned. It simply carries a label so viewers know what to expect. Adults can still watch it. Parents can decide whether their teenagers are ready. The same principle applies to social media.

A rating system protects free expression by making it sustainable. When users can choose what they see, they are less likely to demand that platforms remove content they dislike. Ratings create space for difficult conversations without forcing them on unwilling participants.

Who Decides the Ratings?

Scale is the obvious challenge. Social media platforms host billions of posts. How could anything be rated accurately?

The answer is a combination of three approaches.

AI analysis. Modern language models can analyze text and images for tone, context, and risk factors with high accuracy. At CleoSocial, we use AI to provide an initial rating for every post. This is not perfect, but it is far better than no rating at all.

Community input. Users can suggest rating changes, similar to how review sites work. When enough users agree on a rating, it becomes the default label. This brings the wisdom of crowds to content labels.

Human review for disputes. When a rating is contested, human moderators review the content and make a final determination. Over time, the ratings become more accurate as the system learns from community feedback.

This three-layer approach is scalable, transparent, and resilient.
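The three layers can be read as a precedence order: a human ruling on a disputed post wins, a sufficiently strong community consensus comes next, and the AI rating is the fallback. The following is a minimal sketch under that assumption. The function name and the `min_votes` and `min_share` thresholds are hypothetical, since the text does not say how many users count as "enough".

```python
from collections import Counter
from typing import Optional, Sequence


def resolve_rating(
    ai_rating: str,
    community_votes: Sequence[str],
    human_ruling: Optional[str] = None,
    min_votes: int = 5,      # assumed: minimum number of suggestions
    min_share: float = 0.6,  # assumed: share that must agree
) -> str:
    """Pick the label to display for one post."""
    # Layer 3: a moderator's decision on a contested post is final.
    if human_ruling is not None:
        return human_ruling

    # Layer 2: community consensus replaces the AI label when
    # enough users have voted and most of them agree.
    if len(community_votes) >= min_votes:
        top, count = Counter(community_votes).most_common(1)[0]
        if count / len(community_votes) >= min_share:
            return top

    # Layer 1: fall back to the initial AI rating.
    return ai_rating


print(resolve_rating("PG", ["R", "R", "R", "R", "PG"]))
# prints: R
```

Keeping the thresholds as parameters makes the policy easy to publish and adjust, which fits the goal of transparent criteria.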

What Changes for Parents

Parents currently face an impossible choice. They can allow full access to apps designed to maximize engagement, or they can ban the apps entirely and isolate their children socially.

Content ratings create a middle ground. Families can set content preferences together. A parent might allow content rated up to PG-13 but restrict R-rated material. Teens can request changes as they mature. This builds trust instead of surveillance.

When teens understand the rating system, they can participate in setting their own boundaries. It becomes a conversation rather than a lockdown. Digital literacy replaces parental panic.

What Changes for Adults

Parents are not the only ones who benefit. Adults also deserve control over what enters their minds.

Imagine setting your feed to "PG" during a stressful workweek. Imagine choosing to see only "G" content on a Sunday morning. Imagine knowing that you will not be blindsided by graphic footage while checking messages at lunch.

This is not about avoiding reality. It is about choosing when and how to engage with difficult content. An informed citizen does not need to see every traumatic video to understand what is happening in the world. They need the choice to engage with depth when they are ready.

What Changes for Platforms

The platforms that adopt ratings first will gain a significant competitive advantage.

Users are already leaving major platforms over mental health concerns. The American Psychological Association's 2023 health advisory on social media use in adolescence warned about the risks of excessive exposure to harmful online content. Parents are searching for alternatives. Teens are tired of the endless scroll.

A rating system is a differentiator. It signals that a platform respects its users. It reduces liability by giving users control. It creates a healthier advertising environment where brands do not have to worry about their content appearing next to graphic violence.

The question is not whether content ratings will come to social media. It is which platforms will lead and which will follow.

A Call for Standards

The internet has standards and shared rules for many things. We have HTTP for transferring data. We have WCAG for accessibility. We have GDPR for privacy in Europe. What we do not have is a standard for content intensity.

I am proposing that we create one.

A universal content rating standard for social media would work like this:

- A shared vocabulary. Every platform uses the same labels: G, PG, PG-13, R. Users learn the system once and apply it everywhere.

- Interoperable user preferences. Users set their rating threshold once, and it applies across every platform they use. No more configuring ten different apps.

- Transparent rating criteria. Every platform publishes exactly what each rating means. No hidden rules. No black boxes.

- User override. Adults can always choose to view content above their default threshold. The system informs, it does not restrict.
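To illustrate how interoperable preferences and user override might fit together, here is a rough sketch of a portable preference record that any platform could read. No such standard exists yet, so every name here (`RatingPreference`, its fields, `decide`, and the "show" and "collapsed" outcomes) is a hypothetical choice made for this example.

```python
import json
from dataclasses import asdict, dataclass

# Shared vocabulary, ordered from least to most intense.
ORDER = ["G", "PG", "PG-13", "R"]


@dataclass
class RatingPreference:
    """A user's setting, serializable so it can travel between apps."""
    threshold: str = "PG-13"
    is_adult: bool = False

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "RatingPreference":
        return cls(**json.loads(raw))


def decide(pref: RatingPreference, post_rating: str,
           override_requested: bool = False) -> str:
    """Return "show" or "collapsed" (kept behind its label)."""
    if ORDER.index(post_rating) <= ORDER.index(pref.threshold):
        return "show"
    # Adults may always open content above their default threshold.
    if pref.is_adult and override_requested:
        return "show"
    return "collapsed"


exported = RatingPreference(threshold="PG", is_adult=True).to_json()
imported = RatingPreference.from_json(exported)  # read by another app
print(decide(imported, "R"), decide(imported, "R", override_requested=True))
# prints: collapsed show
```

The point of the design is that the record carries no platform-specific fields, so the same few bytes could be exported from one app and imported into another.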

This standard would not require government regulation. It could emerge from industry consensus, driven by user demand and competitive pressure. The platforms that adopt it will win the trust of the next generation.

What We Are Doing at CleoSocial

CleoSocial was built to prove that this system works.

Every post on our platform is rated G, PG, PG-13, or R. Users set their preferred threshold, and posts above that level are filtered from their feed. Parents can set stricter limits for teen accounts. Teens can set their own limits as they get older.
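The filtering described above can be sketched in a few lines. This is not CleoSocial's actual code. It is a simplified illustration that assumes posts are plain dictionaries with a `rating` key and that a parent's limit acts as a cap on whatever the teen selects; the function names are hypothetical.

```python
from typing import Dict, List, Optional

ORDER = {"G": 0, "PG": 1, "PG-13": 2, "R": 3}


def effective_threshold(own: str, parent_cap: Optional[str] = None) -> str:
    """The stricter of the user's own setting and any parental cap."""
    if parent_cap is None:
        return own
    return min(own, parent_cap, key=ORDER.__getitem__)


def filter_feed(posts: List[Dict[str, str]], own: str,
                parent_cap: Optional[str] = None) -> List[Dict[str, str]]:
    """Keep only posts at or below the effective threshold."""
    limit = ORDER[effective_threshold(own, parent_cap)]
    return [p for p in posts if ORDER[p["rating"]] <= limit]


feed = [
    {"id": "a", "rating": "G"},
    {"id": "b", "rating": "PG-13"},
    {"id": "c", "rating": "R"},
]
# A teen who chose PG-13, with a parent cap of PG, sees only post "a".
print([p["id"] for p in filter_feed(feed, own="PG-13", parent_cap="PG")])
# prints: ['a']
```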

Our rating system combines AI analysis with community input. When users disagree with a rating, they can suggest a change. Over time, the community shapes the standards together.

We are not perfect. We are early. But we are showing that a rated social network is possible — and that people want it.

The Bottom Line

Social media has grown faster than its systems for user protection. We have ratings for every other major medium, but not for the content we scroll through every day. This gap is not accidental. It is profitable for the platforms that benefit from engagement at any cost.

That model is failing. Users are burned out. Parents are frustrated. Regulators are circling. The platforms that survive will be the ones that give users real control.

Content ratings are not a complete solution. They do not fix the attention economy, the ad model, or the privacy problems. But they are a necessary first step. They give users information. They create choice. They make the internet feel less like a jungle and more like a place where informed adults can make their own decisions.

The future of social media should include more transparency, not less. A rating standard is one step toward a healthier relationship with the time we spend online.

We invite every platform, every regulator, and every user who believes in this vision to join the conversation. The technology exists. The demand exists. All that remains is the will to build it.