Why I Built a Social Network That Doesn't Want Your Attention

I was born in 1977 into a house where the news was always on. My dad was a TV anchorman, which meant I grew up watching the world unfold in real time — wars, natural disasters, political upheaval. There were no content warnings. No filters. No way to turn it off without leaving the room.

Back then, we had no choice but to watch.

I used to think that made me informed. Tough, even. But looking back, I realize I was absorbing trauma before I had the tools to process it. The world felt more dangerous than it actually was. My brain learned to stay on high alert, scanning for threats that weren't there. Media researchers have a name for this: "mean world syndrome," a term coined by communications scholar George Gerbner for the belief that the world is far more violent and hostile than reality supports, shaped by heavy exposure to violent media.

I carried that wiring into adulthood. And then social media arrived.

The Night I Realized Social Media Was Broken

I remember the exact moment everything clicked. I was sitting on my couch, scrolling through a popular app after a long day. Thirty minutes later, I felt worse than when I started.

I had seen a traumatic news story I never chose to see. Three ads for things I did not need. A heated argument between strangers that left me agitated. And somewhere in that scroll, graphic footage that shook me — content I had not consented to, appearing without warning in a feed that was supposed to be about friends and hobbies.

I put the phone down and sat in the quiet for a minute. The product wasn't the app. The product was my attention. And it was being sold to the highest bidder without any regard for my mental state.

That was the night I decided to build something different.

The Problem Is Not Addiction. It's Unchecked Content.

Most conversations about social media focus on screen time. How many minutes did you spend? Did you hit your limit? But limiting time is a blunt tool for a delicate problem.

If you spend thirty minutes reading helpful advice or catching up with a close friend, you feel refreshed. If you spend ten minutes looking at graphic violence or political rage, you feel drained. The time didn't change. The content did.

The real issue is unchecked content — raw, unrated, often shocking material that fills our feeds without warning. Algorithms prioritize what gets a fast reaction, and nothing makes us react faster than fear or anger. So the most provocative content rises to the top, regardless of whether we are emotionally prepared for it.

I realized that if I wanted a safer space for my own family, I would have to be the one to build it. Not because I wanted to start a company. Because I couldn't find what I needed anywhere else.

Two Years of Asking Hard Questions

Building a social app is not easy. There were many days I wanted to quit. But the mission kept me moving forward.

We had to rethink every interaction from scratch. Does this button help the user, or does it just keep them trapped? Is this feature here because it adds value, or because it increases engagement? We asked those questions about every pixel.

The biggest challenge was replacing the infinite scroll. Infinite scroll is a design trick that keeps your brain from ever reaching a natural stopping point. It works like a slot machine, paying out just often enough to keep you looking for the next post. Most apps want to hide the exit sign. We wanted to make it easy to leave.

Instead of an endless feed, we built a system that respects your time. When you have seen the updates from your community and the topics you care about, the app tells you that you are all caught up. That small change — a clear endpoint — is revolutionary in an industry built on endlessness.
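For readers who think in code, here is a minimal sketch of what a feed with a clear endpoint could look like. It is an illustration of the idea only, not CleoSocial's actual implementation: the names `FeedItem` and `next_page`, and the page size, are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class FeedItem:
    # Hypothetical shape of one update from a followed community or topic.
    item_id: int
    author: str


def next_page(items, seen_ids, page_size=2):
    """Return (page, caught_up) for a finite feed.

    `items` is every update from the sources the user follows.
    `caught_up` is True when nothing unseen remains after this page,
    which is the signal to show "you're all caught up" instead of
    loading more content.
    """
    unseen = [item for item in items if item.item_id not in seen_ids]
    page = unseen[:page_size]
    caught_up = len(unseen) <= page_size
    return page, caught_up
```

The key design choice in this sketch is that the feed is drawn only from a bounded set of followed sources, so the list of unseen items can actually run out. An engagement-driven feed would backfill with recommendations at that point; this one returns the "caught up" flag and stops.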

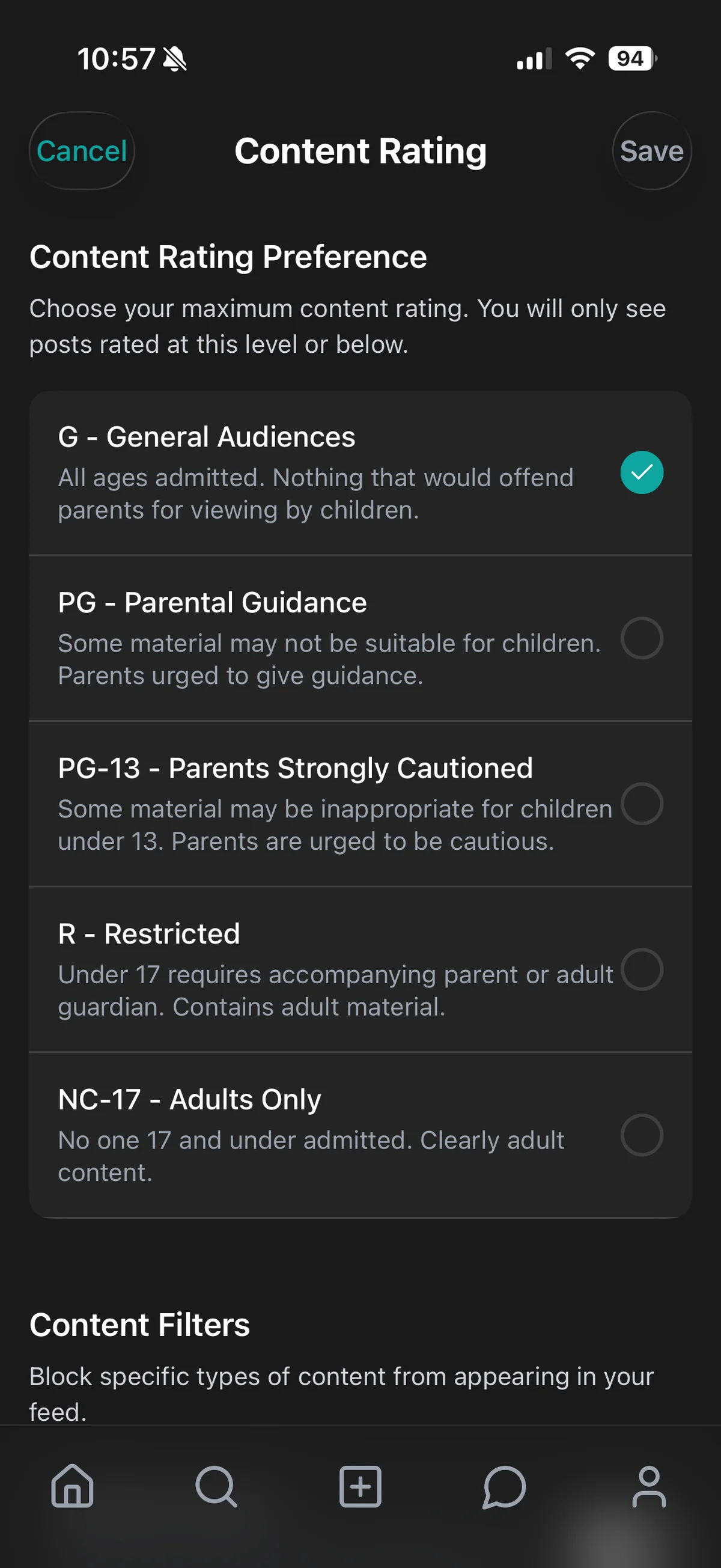

Content Ratings for a New Kind of Feed

If you go to a movie, you check the rating. If you buy a video game for your kids, you check the rating. Why don't we do this for the content we consume for hours every day?

We created a framework where every post is labeled G, PG, PG-13, or R. This puts the power back in your hands. You can set your feed to "PG" during the workday so you don't get distracted by intense topics. Parents can set their teen's account to "G" and "PG" and know the community and our AI are working together to enforce that boundary.

This isn't about telling people what they can or cannot see. It's about giving them the choice.
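To make the idea concrete, here is a small sketch of a rating ceiling applied to a feed. It assumes the four labels are strictly ordered from G to R and that each post already carries a label. The names `Rating`, `RatedPost`, and `filter_feed` are hypothetical and exist only for this example; they are not taken from CleoSocial's code.

```python
from dataclasses import dataclass
from enum import IntEnum


class Rating(IntEnum):
    # Ordered so that a higher value means more intense content.
    G = 0
    PG = 1
    PG13 = 2
    R = 3


@dataclass
class RatedPost:
    text: str
    rating: Rating


def filter_feed(posts, max_rating):
    """Keep only posts at or below the ceiling the user has chosen."""
    return [post for post in posts if post.rating <= max_rating]


feed = [
    RatedPost("Sourdough tips", Rating.G),
    RatedPost("Heated election thread", Rating.PG13),
    RatedPost("Neighborhood cleanup day", Rating.PG),
]

# A user who sets their feed to "PG" for the workday.
workday_feed = filter_feed(feed, Rating.PG)
```

In this sketch the ceiling is a per-user setting rather than a platform-wide rule, which is the point: the same post stays available to someone who has chosen a higher limit.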

Building an AI- and community-driven rating system that actually works took a significant portion of our development time. We had to make sure the ratings were accurate and that users could trust the labels. That system is the backbone of CleoSocial. It is what sets us apart from other apps on the market.

Why We Said No to Billionaire Funding

In the tech world, most people start by looking for big investors. I chose a different path.

When you take millions from venture capital firms, you are no longer in control of your mission. Their goal is profit at all costs, which usually means more ads and more addiction. By staying independent, we could stay true to our values. We didn't have to answer to a board of directors who wanted to know how many hours we could "extract" from our users.

This meant development took longer. We didn't have a team of a thousand engineers. But every line of code was written with the user's health in mind. We aren't building a unicorn company. We are building a sustainable movement. That independence is what allows us to say no to the toxic features other apps are forced to include.

Privacy as a Foundation, Not a Feature

You cannot have digital wellness without digital privacy. Most social media companies are, in practice, data-harvesting businesses. They track where you go, what you buy, and who you talk to so they can build a profile of you.

We built CleoSocial so that we collect the absolute minimum information needed to make the app work. We don't sell your data to third parties. We don't use it to target you with manipulative ads. Building a business model that doesn't rely on selling user data is difficult, but it is the right thing to do.

What We Built Together

We didn't just build an app. We built a community.

Our first members are more than users. They are founding partners in this movement. They help us test new features, give feedback on the rating system, and shape the culture of the platform. By growing slowly and intentionally, we have avoided the "troll culture" that plagues other platforms.

A social app is only as good as the people on it. We have spent these years fostering a space where people feel safe to be themselves without fear of being harassed. That community-first approach is what gives me hope for the future of the internet. It proves there is a hunger for something better, and that people are willing to support a platform that treats them with respect.

The Future of Intentional Connection

As AI-generated content becomes more common, the need for a verified, rated, and human-centric space will only grow. We are building the tools that will help people navigate the "post-truth" era of the internet with confidence.

We are just getting started. Our roadmap is filled with features that focus on local community building, mental health support, and digital literacy. We want CleoSocial to be the place where the next generation learns how to use technology in a way that enhances their life rather than consuming it.

If you are tired of the noise, the ads, and the unchecked content, I invite you to join us.

We built this for you.